Somatosensory representation of limb state during reaching

Representation of reaching in the cuneate nucleus

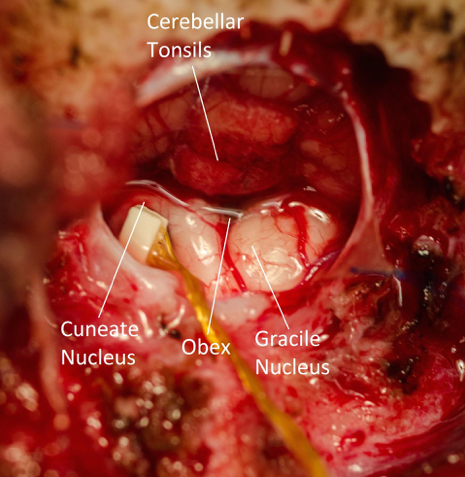

To understand how we perform complex, feedback-controlled reaching and grasping tasks, we need to characterize how proprioceptive signals are transformed from the outputs of simple force and length sensors in the muscles into more complex, multimodal representations of limb state. Although the gracile and cuneate nuclei, which connect the periphery to cortex via the thalamus, were discovered in the early days of neurophysiology, little is known about how they represent proprioceptive information. Using multi-electrode arrays, which have been used in cortical areas for several decades, we are beginning to investigate how the representation of proprioception in the cuneate nucleus differs from that in higher areas of the brain. Cuneate neurons may encode the length and tension of muscles rather than higher-level variables such as hand position. It is also not known whether the individual length and force modalities, encoded by muscle spindles and Golgi tendon organs respectively, are mixed at this level. Answering these questions will provide important context for understanding how proprioception is represented in higher cortical areas and how it is used to generate well-controlled movements.


Representation of motion and force in somatosensory cortical area 2

When we reach toward an object, we think in terms of where our hands are and how they move in the space around us. This "extrinsic" coordinate system is distinct from an "intrinsic" coordinate system that defines limb state in terms of muscle lengths or forces, signals that are sensed by muscle spindles and Golgi tendon organs. At a conscious level, we experience a mixed, transformed version of these sensor outputs. Where and how this transformation from muscle-based signals to our conscious, hand-based representation occurs is unknown. In addition to the cuneate nucleus, we are studying these representations in primary somatosensory cortex (S1), an area of cerebral cortex that processes both cutaneous and muscle signals from the arms. We use multi-electrode arrays, chronically implanted in S1, to record the activity of many neurons simultaneously as the monkey makes reaching movements.

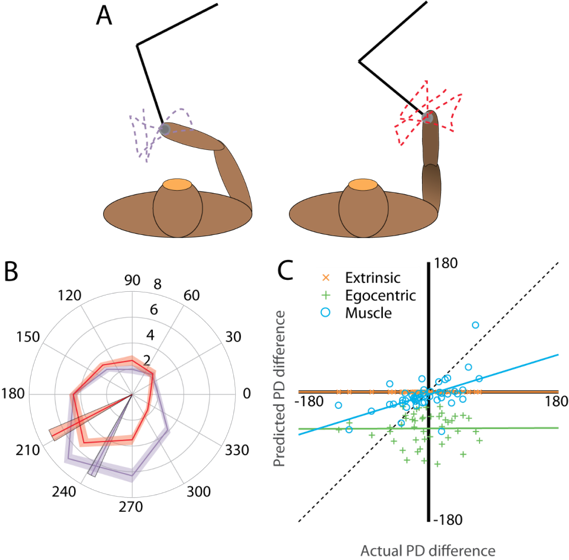

The classic model for proprioception in S1 [1], like our conscious perception, assumes a hand-based representation in which the firing rates of neurons are determined by the movement of the hand in space. Each neuron has a "preferred direction" (PD): the direction of hand motion that produces its maximum firing rate. The neuron is less active when the hand moves in other directions. However, the assumption of a hand-based model has not been directly tested. Within a small working area, the hand-based model and a muscle-based model are similarly effective at describing neural firing, because hand movements and muscle lengths are highly correlated within small ranges. To break this correlation, we collect data as the monkey makes reaching movements in two distinct workspaces. In these two different postures, the hand-based model and the muscle-based model make significantly different predictions about how the neurons behave. By comparing how the neurons actually behave with these predictions, we can draw conclusions about how much coordinate transformation has occurred by the time proprioceptive signals reach S1.
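As a rough illustration of what a PD means in practice, the sketch below fits a cosine tuning curve to simulated firing rates and reads the PD off the fitted coefficients. The cosine form, the function name `fit_preferred_direction`, and all simulated values are assumptions made for this example; they are not taken from the recordings or the analysis described here.

```python
import numpy as np

def fit_preferred_direction(move_dirs, rates):
    """Least-squares fit of rate = b0 + b1*cos(theta) + b2*sin(theta).

    Returns the preferred direction (radians), the modulation depth,
    and the baseline rate.
    """
    design = np.column_stack([np.ones_like(move_dirs),
                              np.cos(move_dirs),
                              np.sin(move_dirs)])
    b0, b1, b2 = np.linalg.lstsq(design, rates, rcond=None)[0]
    return np.arctan2(b2, b1), np.hypot(b1, b2), b0

# Simulated neuron (hypothetical values): PD of 60 degrees, baseline 10 Hz,
# modulation depth 8 Hz, reaches to 8 targets repeated 20 times each.
rng = np.random.default_rng(0)
true_pd = np.deg2rad(60.0)
dirs = np.tile(np.deg2rad(np.arange(0, 360, 45)), 20)
rates = 10.0 + 8.0 * np.cos(dirs - true_pd) + rng.normal(0.0, 1.0, dirs.size)

pd_hat, depth_hat, base_hat = fit_preferred_direction(dirs, rates)
print(f"estimated PD: {np.rad2deg(pd_hat):.1f} deg, depth: {depth_hat:.1f} Hz")
```

Fitting the sine and cosine terms linearly avoids a nonlinear search over the angle; the PD then falls out of `arctan2` on the two coefficients.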

Using visual motion tracking and musculoskeletal modeling, we can estimate the individual muscle lengths in a monkey's arm as it reaches. We then examine how well muscle-based and hand-based models explain neural recordings made in S1. One way to do this is to compare the change in PD that each model predicts between the two workspaces with the change that the neurons actually show. Our preliminary results show that the hand-based model does not predict any PD change at all (see Fig 2). In contrast, the muscle-based model more accurately predicts the change in PD across the workspaces. This finding suggests that area 2 may still represent arm movements in an intrinsic, muscle-based coordinate frame; that is, the transformation to extrinsic coordinates has not yet occurred.
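The difference between the two predictions can be seen in a toy planar two-joint arm. This is a sketch under strong assumptions, not the musculoskeletal model used in the project: the segment lengths, the constant matrix `MUSCLE_MAP` relating joint velocities to muscle lengthening rates, the two postures, and the neuron weights are all hypothetical. A hand-based neuron is linear in hand velocity, and a muscle-based neuron is linear in muscle lengthening rates.

```python
import numpy as np

L_UPPER, L_FORE = 0.3, 0.3  # hypothetical segment lengths (m)

def hand_jacobian(q):
    """Jacobian mapping joint velocities (shoulder, elbow) to planar hand velocity."""
    s1, c1 = np.sin(q[0]), np.cos(q[0])
    s12, c12 = np.sin(q[0] + q[1]), np.cos(q[0] + q[1])
    return np.array([[-L_UPPER * s1 - L_FORE * s12, -L_FORE * s12],
                     [ L_UPPER * c1 + L_FORE * c12,  L_FORE * c12]])

# Hypothetical constant map: muscle lengthening rates = MUSCLE_MAP @ joint velocities.
MUSCLE_MAP = np.array([[ 0.04,  0.00],
                       [-0.04,  0.00],
                       [ 0.00,  0.03],
                       [ 0.00, -0.03],
                       [ 0.03,  0.02]])

def pd_hand_based(w_hand, q):
    """rate = w_hand @ v_hand, so the PD is the direction of w_hand at any posture."""
    return np.arctan2(w_hand[1], w_hand[0])

def pd_muscle_based(w_muscle, q):
    """rate = w_muscle @ MUSCLE_MAP @ inv(J(q)) @ v_hand.

    The PD in hand space is the direction of the gradient of the rate with
    respect to hand velocity, inv(J(q)).T @ MUSCLE_MAP.T @ w_muscle.
    """
    grad = np.linalg.solve(hand_jacobian(q).T, MUSCLE_MAP.T @ w_muscle)
    return np.arctan2(grad[1], grad[0])

def wrap_deg(delta_rad):
    """Wrap an angle difference into [-180, 180) degrees."""
    return np.rad2deg((delta_rad + np.pi) % (2 * np.pi) - np.pi)

# Two hypothetical workspaces: same elbow angle, shoulder rotated by 60 degrees.
q_a = np.deg2rad([30.0, 90.0])
q_b = np.deg2rad([90.0, 90.0])
w_hand = np.array([1.0, 0.5])
w_muscle = np.array([1.0, 0.2, 0.5, 0.0, 0.8])

shift_hand = wrap_deg(pd_hand_based(w_hand, q_b) - pd_hand_based(w_hand, q_a))
shift_muscle = wrap_deg(pd_muscle_based(w_muscle, q_b) - pd_muscle_based(w_muscle, q_a))
print(f"hand-based PD shift: {shift_hand:.1f} deg")
print(f"muscle-based PD shift: {shift_muscle:.1f} deg")
```

In this toy geometry only the shoulder angle differs between the two postures, so the muscle-based PD rotates by the same 60 degrees while the hand-based PD does not move. With a realistic arm and posture-dependent moment arms the predicted shifts would differ from neuron to neuron.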

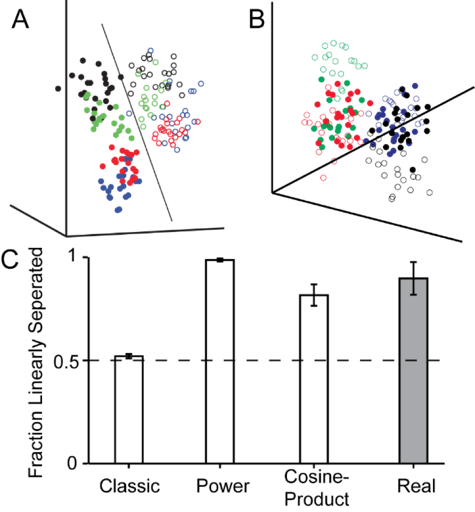

Another thrust of this project is to explore how the submodalities of movement and force are mixed together at the level of S1. Our initial tests involved an experiment in which a monkey either made active reaching movements or had its hand passively bumped towards the target. We examined the population activity of these S1 neurons by constructing a high-dimensional neural space, in which each dimension represented the activity of one neuron during a movement. Interestingly, in this space, the population activity could be linearly separated into active and passive movements. Even more surprisingly, when we simulated neurons using the classic model for proprioception, we could not replicate this separation (see Fig 3). Clearly, the classic model is missing a key element needed to explain this phenomenon. We find, however, that when we add a nonlinear mixing of force and movement, which is not present in the classic model, we can once again separate active and passive movements. One clear example of such a nonlinearity is the power exerted by the monkey on the handle during movement, which is positive during active reaches and negative during passive bumps. However, we find that other nonlinear mixing terms are also sufficient. This suggests that, at some point prior to area 2, a nonlinear mixture of force and movement is introduced. In this part of the project, we have two goals, one top-down and the other bottom-up. In the first, we intend to search for and characterize this nonlinear mixing at the single-neuron level in S1. In the second, we will use modeling of the musculoskeletal system and the muscle sensors to determine whether the nonlinearity arises from the biomechanics of the arm or the physical properties of the sensors, or whether it is added by the central nervous system through neural processing.
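The role of the nonlinear term can be sketched with simulated populations. Everything below is a hypothetical toy: trial variables are in arbitrary units, "rates" are deviations from baseline, and the classifier is a simple least-squares linear discriminant evaluated on held-out trials, which is not necessarily the method used in the project. One population is purely linear in hand velocity and handle force; the other adds a term proportional to power (force dotted with velocity).

```python
import numpy as np

rng = np.random.default_rng(1)
n_trials, n_neurons = 400, 20

# Hypothetical trial variables: random movement directions, half active trials.
theta = rng.uniform(0.0, 2.0 * np.pi, n_trials)
direction = np.column_stack([np.cos(theta), np.sin(theta)])
is_active = rng.random(n_trials) < 0.5
speed = 1.0 + 0.1 * rng.standard_normal(n_trials)
force_mag = 1.0 + 0.1 * rng.standard_normal(n_trials)

velocity = speed[:, None] * direction
# Force of the monkey on the handle: along the movement on active trials,
# against it on passive bumps.
sign = np.where(is_active, 1.0, -1.0)
force = (sign * force_mag)[:, None] * direction
power = np.sum(force * velocity, axis=1)

# Random linear weights on [velocity, force], plus an optional power term.
w_linear = rng.standard_normal((4, n_neurons))
w_power = rng.standard_normal(n_neurons)
noise = 0.3 * rng.standard_normal((n_trials, n_neurons))

rates_linear = np.hstack([velocity, force]) @ w_linear + noise
rates_mixed = rates_linear + np.outer(power, w_power)

def heldout_linear_accuracy(rates, labels):
    """Fit a least-squares linear readout on half the trials, test on the rest."""
    design = np.column_stack([np.ones(len(rates)), rates])
    target = np.where(labels, 1.0, -1.0)
    half = len(target) // 2
    weights = np.linalg.lstsq(design[:half], target[:half], rcond=None)[0]
    return np.mean(np.sign(design[half:] @ weights) == target[half:])

acc_linear = heldout_linear_accuracy(rates_linear, is_active)
acc_mixed = heldout_linear_accuracy(rates_mixed, is_active)
print(f"linear-only population: {acc_linear:.2f}")
print(f"with power term:        {acc_mixed:.2f}")
```

In this toy, active and passive trials are both symmetric about the origin in the velocity and force variables, so no linear readout of the purely linear population can separate them cleanly, whereas the sign of the power term separates them directly.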

References

[1] Prud'homme, M. J., & Kalaska, J. F. (1994). Proprioceptive activity in primate primary somatosensory cortex during active arm reaching movements. Journal of Neurophysiology, 72(5), 2280-2301. Retrieved from http://www.ncbi.nlm.nih.gov/pubmed/7884459